Setting up the test

It probably doesn’t come as a surprise that, before presenting quantitative test results, we need to define and set up the actual test. There are many ways to gather usability results; one way to gather quantitative data is through questionnaires.

Measuring usability quantitatively

Usability questionnaires have attracted researchers’ attention for years. Almost whichever one we pick, it will produce some sort of scale (a number) from “completely unusable” to “completely usable”. We will only touch briefly on picking the best questionnaire here, as it deserves a post of its own. In short, different questionnaires try to work around an inevitable truth: “one cannot measure everything with one question”.

The System Usability Scale is fairly accurate at measuring the usability of a given system; whether its 10 questions are too many depends on the research context. UMUX-Lite uses just 2 questions but is somewhat less accurate [2]. Other questionnaires, like the Net Promoter Score, come with their own trade-offs.

In this example we will use the System Usability Scale (SUS), as it is one of the most researched questionnaires [1], and scores from other usability questionnaires can be projected onto its scale with good accuracy [2].
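The calculations later in this post all start from each participant’s SUS score. For reference, here is a minimal Python sketch of the standard SUS scoring rule, assuming the ten answers are recorded in questionnaire order on the usual 1 to 5 scale: odd-numbered (positively worded) items contribute `response - 1`, even-numbered (negatively worded) items contribute `5 - response`, and the total is multiplied by 2.5 to land on the 0 to 100 scale. The function name `sus_score` is illustrative.

```python
def sus_score(responses: list[int]) -> float:
    """Score one participant's SUS questionnaire on a 0-100 scale.

    `responses` holds the ten answers in questionnaire order, each on a
    1 (strongly disagree) to 5 (strongly agree) scale.
    """
    if len(responses) != 10:
        raise ValueError("SUS has exactly 10 items")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each response must be between 1 and 5")
    total = 0
    for item, response in enumerate(responses, start=1):
        if item % 2 == 1:
            # Odd items are positively worded: more agreement is better.
            total += response - 1
        else:
            # Even items are negatively worded: less agreement is better.
            total += 5 - response
    # Each item contributes 0-4, so the raw total is 0-40; scale it to 0-100.
    return total * 2.5


# Full agreement with odd items and full disagreement with even items.
print(sus_score([5, 1] * 5))  # 100.0
print(sus_score([3] * 10))    # 50.0
```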

Understanding the optimal number of participants

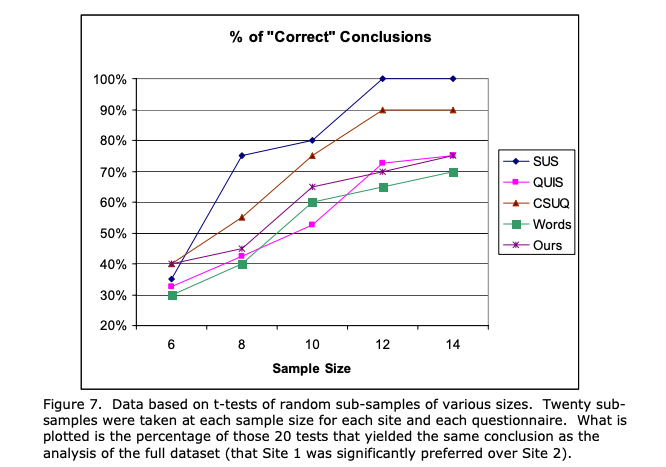

Guidelines differ depending on the questionnaire. For SUS, we can follow the diagram above.

Define goals

Every usability test involves a trade-off between cost and accuracy. Getting from 75% to 100% accuracy requires 4 more users (a 50% increase in cost). Sometimes 75% is enough; other times 100% accuracy is needed. Still, even with 75%, there are ways to present the information with 100% accuracy. More on that later.
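The cost side of that trade-off is simple arithmetic. A small Python sketch, assuming cost scales linearly with the number of participants and using the participant counts this post works with (12 for 100%, 10 for 80%, and 8 for 75%, the last implied by the “4 more users, 50% more cost” figure). The names are illustrative.

```python
# Participants needed per target accuracy (%), as used in this post's SUS example.
PARTICIPANTS_FOR_ACCURACY = {75: 8, 80: 10, 100: 12}


def extra_cost(from_accuracy: int, to_accuracy: int) -> tuple[int, float]:
    """Return (extra participants, relative cost increase) between two targets.

    Assumes cost grows linearly with the number of participants.
    """
    start = PARTICIPANTS_FOR_ACCURACY[from_accuracy]
    end = PARTICIPANTS_FOR_ACCURACY[to_accuracy]
    extra = end - start
    return extra, extra / start


print(extra_cost(75, 100))  # (4, 0.5): 4 more users, 50% more cost
print(extra_cost(80, 100))  # (2, 0.2): 2 more users, 20% more cost
```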

Uncover limitations

Using a questionnaire to assess the usability of a system comes with pitfalls. A usability score doesn’t show where the problem lies. Scores can be compared between systems, but even if one system scores much higher than another, the comparison only tells us which of the two is more usable, not by how much. The size of the system plays a role as well: small systems tend to receive stricter scores [4].

Presenting the results

So, we have defined the process we want to test and created the experiment (instructions, mockups, redirections, etc.). We found the participants, they went through the experiment, and they answered the questionnaire.

Presenting based on target accuracy

If the final accuracy is 100%, presenting is straightforward. However, if the target accuracy was below 100%, or we didn’t get enough participants to reach 100%, let’s discuss two ways to present the results.

Present in ranges to improve accuracy

Ultimately, what a score with low accuracy means is: “we are not sure about the exact usability score, but it should be around x”. The problem we then want to solve is how far from x the actual score could be.

To calculate that, let’s imagine that we wanted to achieve 100% accuracy in our SUS questionnaire, but we only got 10 participants. We know that 10 participants give 80% accuracy (see image above). The SUS score we got is 63.5. So, “we are not sure about the exact usability score, but it should be around 63.5”.

To reach 100% accuracy we would need 2 more people, 12 in total. Let’s first examine the worst-case scenario:

Two more participants magically appeared, and they both rated the system as “completely unusable”, each giving a score of 0 out of 100.

On the bright side, the best-case scenario would be:

Two more participants appeared and they both rated the system as “completely usable”, both giving a score of 100 out of 100.

To express the SUS score with 100% accuracy, we just need to find the score in the worst-case scenario and in the best-case scenario. To calculate the worst-case score we:

- Calculate each participant’s score

- Add them all together

- Divide by 12

In our example, this gives roughly 53.

To calculate the best-case score we:

- Calculate each participant’s score

- Add them all together and add (12 – 10) * 100

- Divide by 12

In our example, this gives roughly 70.
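Written out for the example: ten participants averaging 63.5 sum to 635, and that total is all the two calculations need (the individual scores $s_i$ are not required).

$$
\text{worst} = \frac{\sum_{i=1}^{10} s_i}{12} = \frac{10 \times 63.5}{12} = \frac{635}{12} \approx 52.9
$$

$$
\text{best} = \frac{\sum_{i=1}^{10} s_i + (12 - 10) \times 100}{12} = \frac{635 + 200}{12} = \frac{835}{12} \approx 69.6
$$

Rounded, that is the 53 to 70 range.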

This works regardless of the number of participants or the target accuracy. Some examples:

If we have 9 participants and we want to figure out the range with 100% accuracy

Worst case scenario:

- Calculate each participant’s score

- Add them all together

- Divide by 12

Best case scenario:

- Calculate each participant’s score

- Add them all together and add (12 – 9) * 100

- Divide by 12

If we have 6 participants and we want to figure out the range with 80% accuracy

For 80% accuracy, we need 10 participants.

Worst case scenario:

- Calculate each participant’s score

- Add them all together

- Divide by 10

Best case scenario:

- Calculate each participant’s score

- Add them all together and add (10 – 6) * 100

- Divide by 10
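The recipe generalizes to any participant count and target. Here is a minimal Python sketch, assuming the same accuracy-to-participants mapping used in these examples (8 for 75%, 10 for 80%, 12 for 100%); `sus_range` and `PARTICIPANTS_FOR_ACCURACY` are illustrative names, not part of any library.

```python
# Participants needed per target accuracy (%), as used in this post's SUS example.
PARTICIPANTS_FOR_ACCURACY = {75: 8, 80: 10, 100: 12}


def sus_range(scores: list[float], target_accuracy: int) -> tuple[float, float]:
    """Return the (worst, best) mean SUS score at the given target accuracy.

    Missing participants are imagined as scoring 0 (worst case) or
    100 (best case); the total is divided by the target participant count.
    """
    target_n = PARTICIPANTS_FOR_ACCURACY[target_accuracy]
    missing = target_n - len(scores)
    if missing < 0:
        # Dividing by target_n would be wrong if we already exceed it.
        raise ValueError("more participants than the target requires")
    total = sum(scores)
    worst = total / target_n
    best = (total + missing * 100) / target_n
    return worst, best


# Only the average (63.5) of the 10-participant example is reported, so ten
# copies of it stand in for the real scores; the sum, which is all the
# calculation uses, is the same.
worst, best = sus_range([63.5] * 10, 100)
print(round(worst), round(best))  # 53 70
```

When the sample already matches the target, `missing` is 0 and both bounds equal the plain average.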

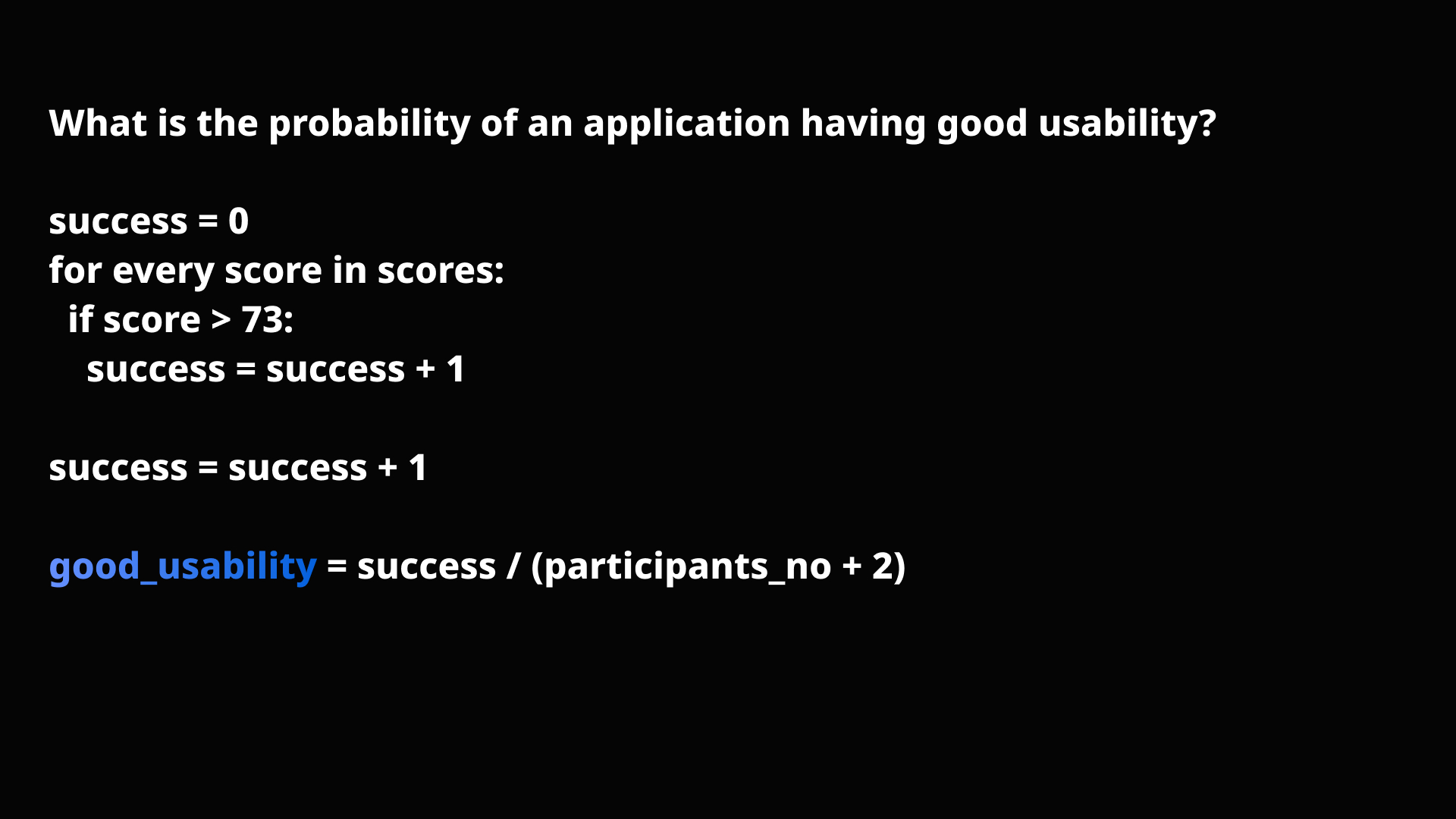

Laplace’s Rule of Succession

Another way to present the results is answering the question “What is the probability that the next person, who will use the system, will rate it with at least good usability?”

Let’s try to answer that question:

A system has good usability if its SUS score is above 73. So:

- Go through all SUS scores and mark a score as a “success” if it is above 73.

- Count the number of “successes” and add 1

- Divide that number by the total number of participants plus two
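In formula form, with $n$ participants of whom $k$ count as successes, the three steps above estimate the probability that the next participant is a success as:

$$
P(\text{next participant is a success}) = \frac{k + 1}{n + 2}
$$

The added 1 and 2 act like one imaginary success and one imaginary failure, which keeps the estimate away from exactly 0% or 100% when the sample is small.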

To talk numbers, let’s say that we have these 10 scores:

| SUS score | Above 73? |
|---|---|
| 78 | SUCCESS |
| 62.5 | FAIL |
| 36 | FAIL |
| 75 | SUCCESS |
| 83 | SUCCESS |
| 39 | FAIL |
| 67 | FAIL |
| 37 | FAIL |
| 78 | SUCCESS |
| 81 | SUCCESS |

5 of them are successes. That means the probability that the next participant rates the system as having good usability is (5 + 1) / (10 + 2) = 50%.

With the same logic, 7 of the 10 scores are above 52, so the probability that the next participant rates the system as having at least OK usability (SUS > 52) is (7 + 1) / (10 + 2) = 8 / 12 ≈ 67%.
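The counting can be checked with a few lines of Python. This is a minimal sketch of the rule described above, using the ten scores from the table and the thresholds from the text (above 73 for good, above 52 for OK); `rule_of_succession` is an illustrative name. `Fraction` keeps the result exact instead of a rounded float.

```python
from fractions import Fraction

# The ten SUS scores from the table above.
SCORES = [78, 62.5, 36, 75, 83, 39, 67, 37, 78, 81]


def rule_of_succession(scores: list[float], threshold: float) -> Fraction:
    """Laplace's rule of succession: (successes + 1) / (participants + 2).

    A score counts as a success when it is strictly above `threshold`.
    """
    successes = sum(1 for score in scores if score > threshold)
    return Fraction(successes + 1, len(scores) + 2)


good = rule_of_succession(SCORES, 73)  # 5 successes -> 6/12
ok = rule_of_succession(SCORES, 52)    # 7 successes -> 8/12
print(good, float(good))  # 1/2 0.5
print(ok, float(ok))      # 2/3 0.6666666666666666
```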

Discussion

Calculating ranges

Ranges are an inevitable consequence of low accuracy. Getting a score of, say, 63 with an accuracy of 75% means we are uncertain about the result. We are not sure that the actual usability of the system is 63; it could be 58, or 75, or something in between.

Now, stakeholders will often prefer a single number to an interval. The interval may look like it doesn’t give much information: what does it mean that the system has a usability between 52 and 70? At the same time, presenting a single number with low accuracy carries exactly the same information (or lack of it). In a way, the interval is a visualisation of the accuracy.

The width of the interval reflects how uncertain we are. If we have great uncertainty, the interval will be wide. If we are very certain, it will be narrow. And of course, if we are 100% certain, the interval is as small as possible: a single value.
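For the worst/best-case ranges used in this post, that relationship is exact: the two bounds differ only by the imagined 100-point scores, so the width depends on how many participants are missing, not on the scores themselves. A short Python sketch (the function name is illustrative):

```python
def interval_width(participants: int, target_participants: int) -> float:
    """Width (in SUS points) of the worst/best-case range.

    best - worst = (missing * 100) / target, independent of the actual scores.
    """
    missing = target_participants - participants
    if missing < 0:
        raise ValueError("participants exceed the target")
    return missing * 100 / target_participants


for have, target in [(10, 12), (9, 12), (6, 10), (12, 12)]:
    print(f"{have} of {target}: {interval_width(have, target):.1f} points")
# 10 of 12: 16.7 points
# 9 of 12: 25.0 points
# 6 of 10: 40.0 points
# 12 of 12: 0.0 points
```

The 10-of-12 case matches the roughly 53 to 70 spread of the worked example, and a full sample collapses the range to a single value.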

We can use this visualisation of the accuracy (the interval) to extract valuable information. One insight is whether the higher or lower end of the interval is acceptable. If the higher end is not acceptable (or only marginally so), we are likely dealing with a low-usability system, even if we don’t know the exact number. If the lower end is acceptable, the system in question probably has good usability. The same applies to adjective ratings and grade scales.
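That reading can be sketched as a small decision rule in Python. The threshold is a parameter because the post doesn’t fix a number for “acceptable”; the 70 in the usage line is an assumption (a commonly cited lower bound of the “acceptable” range on the SUS scale), and `interpret_interval` is a hypothetical name.

```python
def interpret_interval(low: float, high: float, threshold: float) -> str:
    """Classify a (low, high) SUS range against an acceptability threshold."""
    if low > high:
        raise ValueError("low must not exceed high")
    if high < threshold:
        # Even the best case falls short of the threshold.
        return "low usability"
    if low >= threshold:
        # Even the worst case clears the threshold.
        return "good usability"
    # The range straddles the threshold, so the data can't decide yet.
    return "inconclusive"


# The 10-participant example (about 52.9 to 69.6) against an assumed threshold of 70.
print(interpret_interval(52.9, 69.6, threshold=70))  # low usability
```

A “marginally acceptable” band would need a second threshold; it is left out to keep the sketch small.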

At some point, someone may notice that not all scores are equally likely. For example, it is unlikely that a participant would give a score of 0 when ten others have already averaged 63.5, let alone that both additional participants would. The same applies to 100.

This is a correct observation. Indeed, the people who would have joined in reality would most likely give scores close to the ones we already saw. This opens a whole new discussion, as mathematicians have tried for years to understand and predict the behaviour of systems like this. Gradient noise, such as Perlin noise, was introduced to create random data linked to the previous value. From there, the discussion extends to the sensitivity of systems and extreme values, which we will leave for another time.

Speaking of probabilities, there is something we can calculate…

Laplace’s Rule of Succession

From the previous discussion, a logical question might arise: “Okay, we know that the system has relatively good usability, but how likely is it that the next person will actually find it good?”

Essentially, we can measure the probability of anything on the SUS scale:

- What is the probability that the next person who uses the system will rate it as at least excellent/good/poor etc.?

- What is the probability that the next person who uses the system will rate it as at least acceptable?

- What is the likelihood of dealing with an A grade system?

- …

The method stays the same as we discussed before, and once again we are trying to express our uncertainty.

The answer to each of these questions depends on two factors:

- The number of participants. This is intuitive: the more participants we have, the better we can estimate a probability. If we have the number of participants needed for the target 100% accuracy, we don’t even need to calculate the probability; we are certain.

- Whether the threshold we ask about sits at the lower or the higher end of the interval. For example, the probability of the system being at least poor, in the example with the 10 scores above, is 66%. The probability of it being at least the worst imaginable is 91%. This is because the probability and the interval measure the same thing: uncertainty.

If this discussion sparked your curiosity, 3Blue1Brown has a video that introduces these concepts with very good explanations and broader examples.

References

[1] https://uxpajournal.org/sus-a-retrospective/

[4] http://tecfa.unige.ch/tecfa/maltt/ergo/1415/UtopiaPeriode4/articles/JUS_Kortum_November_2013.pdf